Artificial intelligence is evolving incredibly fast, and every few months a new model changes what we expect from AI assistants. One of the latest updates making waves in the industry is Grok 4.1, the newest version of the Grok AI model developed by xAI, the artificial intelligence company founded by Elon Musk.

This guide breaks down what Grok 4.1 does, how it differs from earlier versions, and why it is getting attention from both everyday users and developers.

Grok 4.1 introduces several upgrades, including a 2-million token context window, improved reasoning modes, and support for modern AI agent frameworks. Trained on infrastructure such as the Colossus supercomputer cluster, the model is designed to compete with leading systems from companies like OpenAI, Google DeepMind, and Anthropic.

What Is Grok?

Grok is a large language model (LLM) created by xAI and designed for conversational AI, automation tools, and real-time information analysis. The model is closely integrated with the social platform X (formerly Twitter), allowing users to ask questions and analyze trending discussions directly inside the platform.

Like other modern AI chatbots, Grok can generate text, write code, summarize documents, answer questions, and assist with creative tasks. However, the system is known for having a more conversational personality compared to traditional AI assistants.

According to the official research updates published on the xAI news and research portal, Grok models are continuously improved using reinforcement learning and large-scale training data.

Grok 4.1 Explained: What Changed?

The release of Grok 4.1 focuses on three major areas:

- Improved reasoning capabilities

- Much larger context window

- Better real-world usability for developers and everyday users

In internal testing, human evaluators preferred responses from Grok 4.1 roughly 64.7% of the time compared with earlier versions, according to reporting from NewsBytes technology coverage.

This improvement suggests the model is not just bigger; it is also more helpful in day-to-day conversations.

Key Features of Grok 4.1

1. Massive 2-Million Token Context Window

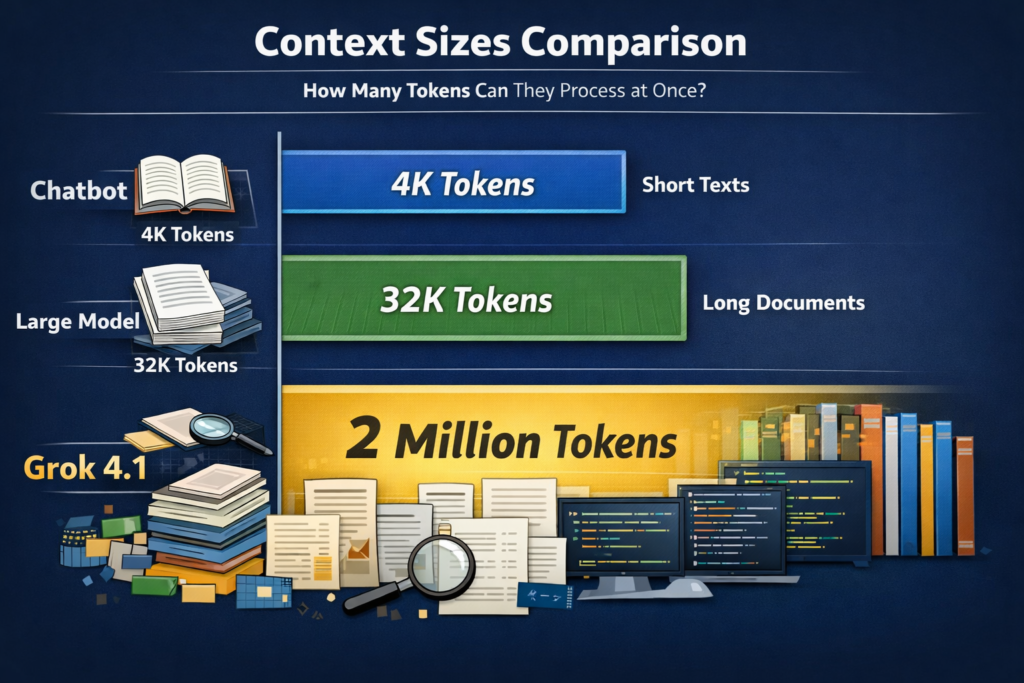

One of the most important upgrades in Grok 4.1 is its 2-million token context window.

In simple terms, the model can process a very large amount of text in a single prompt. At a rough rate of 0.75 English words per token, 2 million tokens is about 1.5 million words, which is potentially thousands of pages of documents, code, or research papers at once.

Large context windows are becoming a key battleground in AI development because they allow models to analyze complex data sets without losing track of earlier information.

For example, developers could upload an entire codebase and ask Grok to identify bugs or performance issues across multiple files.
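Before sending a whole codebase in one prompt, it helps to estimate whether it fits. The sketch below is a rough budget check, not a real tokenizer: the 4-characters-per-token ratio, the file suffix list, and the output reserve are all assumptions chosen for illustration, and actual token counts depend on the model's tokenizer.

```python
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 2_000_000  # context window figure quoted in this article
CHARS_PER_TOKEN = 4  # assumption: rough average for English text and code


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (not a real tokenizer)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def estimate_repo_tokens(root: str, suffixes=(".py", ".md", ".txt")) -> int:
    """Sum the estimated tokens of every matching file under root."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(token_count: int, reserve_for_output: int = 50_000) -> bool:
    """Check the estimate against the window, leaving room for the reply."""
    return token_count + reserve_for_output <= CONTEXT_WINDOW_TOKENS
```

Calling `fits_in_context(estimate_repo_tokens("path/to/repo"))` gives a quick yes or no before any API call is made. If the answer is no, the codebase has to be split or filtered first.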

2. Thinking Mode vs Non-Thinking Mode

Another important feature of Grok 4.1 is its dual operating modes.

Thinking Mode

Thinking Mode lets the model reason through a problem internally before generating a response. Its main benefits are:

- better analytical reasoning

- stronger research capability

- improved problem solving

Non-Thinking Mode

This mode produces answers faster by skipping extended reasoning steps. Its main benefits are:

- faster responses

- lower computational cost

- better for casual questions

This flexibility allows developers to choose between speed and reasoning depth depending on the task.
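The speed-versus-depth choice can be sketched as a small routing function. This is a minimal illustration under stated assumptions: the two model identifiers are placeholders rather than real xAI model names, the task categories are invented for the example, and the request shape simply follows the common chat-message convention.

```python
from dataclasses import dataclass

# Placeholder identifiers (assumptions); check xAI's model list for real names.
THINKING_MODEL = "grok-4.1-thinking-placeholder"
NON_THINKING_MODEL = "grok-4.1-non-thinking-placeholder"

# Hypothetical task categories that benefit from extended reasoning.
REASONING_TASKS = {"analysis", "research", "debugging", "math"}


@dataclass
class ChatRequest:
    model: str
    messages: list


def build_request(prompt: str, task_type: str) -> ChatRequest:
    """Pick the slower reasoning mode only when the task calls for it."""
    model = THINKING_MODEL if task_type in REASONING_TASKS else NON_THINKING_MODEL
    return ChatRequest(model=model, messages=[{"role": "user", "content": prompt}])
```

With this routing, `build_request("Why does this query deadlock?", "debugging")` selects the thinking model, while a casual question tagged `"chat"` falls through to the faster one.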

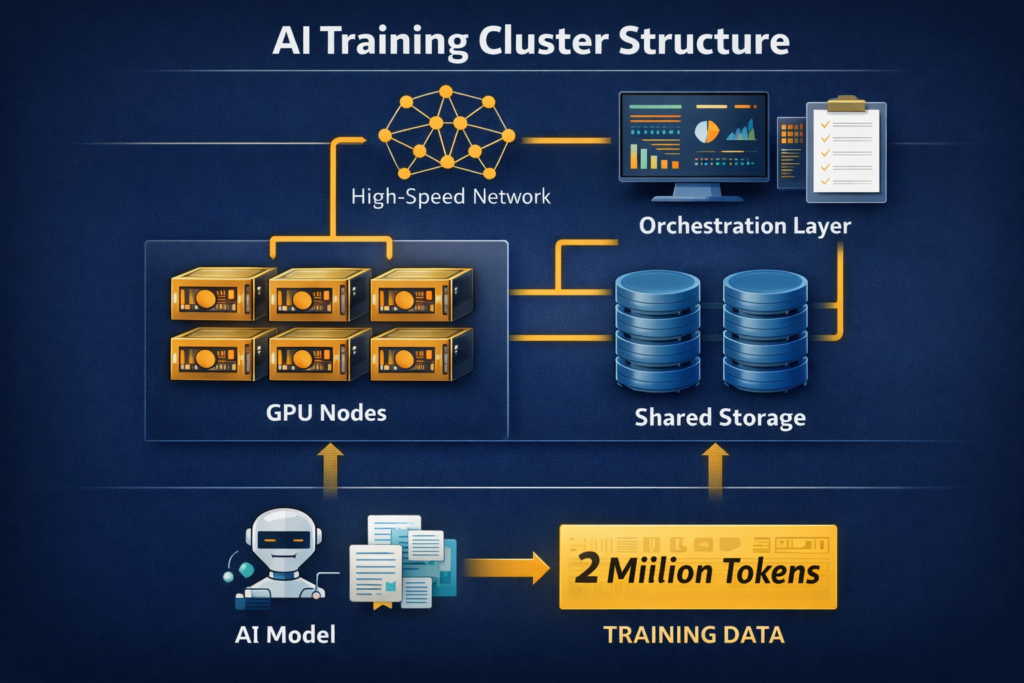

3. Training on the Colossus Supercomputer Cluster

A major factor behind Grok’s performance is its training infrastructure.

The model was trained using Colossus, an extremely large AI supercomputer cluster built by xAI. The system uses tens of thousands of GPUs working together to train advanced machine learning models.

Large-scale computing infrastructure like Colossus allows AI developers to train models faster and experiment with larger neural networks.

4. Model Context Protocol (MCP) Support

Another major technical improvement is support for the Model Context Protocol (MCP).

MCP is an open protocol, originally introduced by Anthropic, that gives AI models a standard way to connect with tools, data sources, and external services. This makes Grok more compatible with modern AI agent and automation workflows.

For developers building agent-based systems, MCP support allows Grok to work with databases, APIs, and external services more efficiently.
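To make this concrete, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below builds such a request and answers it with a toy in-process handler. The `count_rows` tool and the handler are hypothetical stand-ins for a real MCP server, and no network transport or Grok-specific behavior is shown.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style tools/call request as JSON-RPC 2.0."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# Hypothetical tool registry standing in for a real MCP server.
TOOLS = {
    "count_rows": lambda args: str(len(args["rows"])),
}


def _error(msg_id, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": msg_id,
                       "error": {"code": code, "message": message}})


def handle_message(raw: str) -> str:
    """Toy handler: run the named tool and wrap its output as text content."""
    msg = json.loads(raw)
    if msg.get("method") != "tools/call":
        return _error(msg.get("id"), -32601, "Method not found")
    params = msg["params"]
    tool = TOOLS.get(params["name"])
    if tool is None:
        return _error(msg["id"], -32602, f"Unknown tool: {params['name']}")
    text = tool(params["arguments"])
    result = {"content": [{"type": "text", "text": text}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": msg["id"], "result": result})
```

In a real deployment the request would travel over a transport such as stdio or HTTP to a separate server process. Keeping both sides in one file here only shows the message shapes.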

5. Strong Benchmark Performance

Grok 4.1 performs strongly on several AI benchmarks. In the LMArena leaderboard, the model achieved a rating around 1483 Elo, according to benchmark analysis reported by NewsBytes.

Elo scores measure how frequently users prefer one AI model over another in blind comparison tests.
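The link between a rating gap and a preference rate can be worked out with the standard Elo formula, where the expected win rate of model A over model B is 1 / (1 + 10^((R_B - R_A) / 400)). The sketch below assumes that classic formula; LMArena's exact rating method may differ, and the opponent rating of 1450 in the usage note is a hypothetical number, not a reported score.

```python
import math


def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the classic Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def rating_gap_for_win_rate(p: float) -> float:
    """Inverse: the Elo gap implied by a head-to-head preference rate p."""
    return 400 * math.log10(p / (1 - p))
```

For instance, `expected_win_rate(1483, 1450)` comes out slightly above one half, so a gap of about 30 points means the higher-rated model is preferred only a little more often than not. Going the other way, `rating_gap_for_win_rate(0.647)` shows that a preference rate like the 64.7% quoted earlier corresponds to a gap of roughly 100 points under this model.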

Technical Specifications

| Feature | Grok 4.1 Specification |
|---|---|
| Context Window | Up to 2 million tokens |
| Benchmark Score | ~1483 Elo (LMArena) |
| Reasoning Modes | Thinking Mode and Non-Thinking Mode |
| Agent Support | Model Context Protocol (MCP) |
| Estimated API Cost | Approx. $0.20 per 1M input tokens |
| Training Infrastructure | Colossus AI Supercomputer Cluster |
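The estimated input price in the table translates into per-request cost with simple arithmetic. The sketch below uses the approximate $0.20 per 1M input tokens figure from the table as its default. It covers input tokens only, since no output price is given here, and actual pricing may differ.

```python
INPUT_PRICE_PER_MILLION_USD = 0.20  # approximate figure from the table above


def input_cost_usd(input_tokens: int,
                   price_per_million: float = INPUT_PRICE_PER_MILLION_USD) -> float:
    """Estimated cost of the input side of one request, in US dollars."""
    return input_tokens / 1_000_000 * price_per_million
```

At that rate, a prompt that fills the entire 2-million token window would cost about $0.40 in input tokens, and a 50,000-token prompt about one cent.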

Real-World Use Cases

Content Creation

Writers, marketers, and creators can use Grok to draft blog posts, marketing copy, product descriptions, and social media content.

Coding Assistance

Developers increasingly use AI assistants to debug code and explain complex algorithms.

For example, in testing scenarios described in AI developer discussions, Grok 4.1 identified a database race condition (a bug where multiple processes try to update the same resource at the same time) and suggested a fix using transactional locking. Problems like this are often hard to find quickly with traditional debugging tools.
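The kind of fix described above can be illustrated with a small read-modify-write counter. This is a sketch under assumptions, not the code from those discussions: it uses SQLite through Python's standard `sqlite3` module, and the `counter` table, worker counts, and function names are invented for the example. The key step is `BEGIN IMMEDIATE`, which takes the write lock before the value is read, so two workers cannot both read the same stale value and overwrite each other's update.

```python
import os
import sqlite3
import tempfile
import threading


def make_db() -> str:
    """Create a temporary database holding a single counter row."""
    fd, path = tempfile.mkstemp(suffix=".db")
    os.close(fd)
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE counter (id INTEGER PRIMARY KEY, value INTEGER)")
    conn.execute("INSERT INTO counter VALUES (1, 0)")
    conn.commit()
    conn.close()
    return path


def safe_increment(path: str, times: int) -> None:
    # isolation_level=None disables implicit transactions so BEGIN/COMMIT are explicit.
    conn = sqlite3.connect(path, timeout=30, isolation_level=None)
    try:
        for _ in range(times):
            conn.execute("BEGIN IMMEDIATE")  # acquire the write lock before reading
            (value,) = conn.execute(
                "SELECT value FROM counter WHERE id = 1").fetchone()
            conn.execute("UPDATE counter SET value = ? WHERE id = 1", (value + 1,))
            conn.execute("COMMIT")
    finally:
        conn.close()


def run_workers(workers: int = 4, times: int = 50) -> int:
    """Run concurrent incrementers and return the final counter value."""
    path = make_db()
    threads = [threading.Thread(target=safe_increment, args=(path, times))
               for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    conn = sqlite3.connect(path)
    (value,) = conn.execute("SELECT value FROM counter WHERE id = 1").fetchone()
    conn.close()
    os.remove(path)
    return value
```

Because every read happens inside a transaction that already holds the write lock, the final value equals `workers * times`. Without the lock, a plain read followed by a separate write can silently lose updates when two workers interleave.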

Real-Time Social Media Analysis

Because Grok integrates with X, it can analyze trending topics and summarize large public conversations in real time.

Pros and Cons of Grok 4.1

Pros

- Huge 2M token context window

- Strong reasoning capability

- Flexible thinking modes

- Real-time social data integration

- Support for AI agent frameworks

Cons

- Developer ecosystem still growing

- Safety policies still evolving

- Some advanced features require paid access

Grok 4.1 vs Other AI Models

| Model | Developer | Key Strength |
|---|---|---|
| GPT-4 Series | OpenAI | Strong reasoning and ecosystem |
| Gemini | Google DeepMind | Integration with Google products |
| Claude | Anthropic | Long context and safety focus |
| Grok 4.1 | xAI | Real-time data and personality-driven AI |

Frequently Asked Questions

What is Grok 4.1?

Grok 4.1 is an advanced AI language model created by xAI that introduces a 2M token context window, improved reasoning modes, and better conversational performance.

How can I access Grok?

Grok is available primarily through the X platform and through developer APIs released by xAI.

Why does the 2M token context window matter?

It allows the model to analyze huge amounts of information at once, including entire documents, research datasets, or software codebases.

Conclusion

Grok 4.1 represents a significant step forward in AI development.

The model combines powerful infrastructure, such as the Colossus AI cluster, with practical upgrades like a 2-million token context window, flexible reasoning modes, and improved conversational ability.

As competition intensifies between companies like xAI, OpenAI, and Google DeepMind, models like Grok 4.1 show how AI assistants are evolving from simple chatbots into powerful tools for research, programming, and everyday productivity.
